From the people who brought you AFST: The most dangerous "child welfare" algorithm yet

NCCPR Child Welfare Blog

APRIL 3, 2023

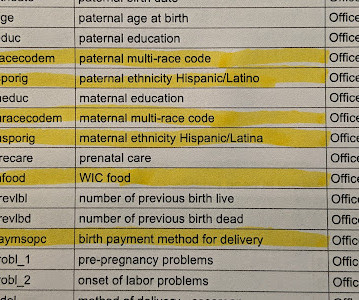

It’s literally computerized racial profiling: race and ethnicity are explicitly used to rate the risk that a child will be harmed. As is so often the case with these algorithms, they are less prediction than self-fulfilling prophecy. There is no opportunity for any family to opt out or deny the use of their personal data.
